How AI Song Maker Changes Early Music Decisions

Most music ideas do not fail because people lack feeling. They fail because the distance between feeling and form is too large. Someone may know they want a song that sounds restless, warm, cinematic, or intimate, yet turning that instinct into lyrics, melody, arrangement, and a usable file is a different challenge altogether. That is where AI Song Maker becomes worth examining. Its value is not simply that it generates music, but that it reduces the number of creative bottlenecks between intention and output.

What makes the platform notable is that it does not frame music creation as a single dramatic moment of inspiration. Instead, it presents it as a series of smaller decisions: whether to start from a prompt or from lyrics, whether to prioritize speed or control, whether to generate a rough sketch first or aim directly at a polished result, and whether to stop at the first song or continue into editing tools like vocal separation and stem splitting. In that sense, the platform feels less like a gimmick and more like a system for helping people move through the first half of the music-making process.

The interesting question, then, is not whether AI can “make songs now.” That part is already visible. The more useful question is how a tool like this reorganizes creative work. In my view, AISong is most revealing when it is treated as a decision engine. It helps users make choices earlier, compare options faster, and arrive at something coherent before technical complexity gets in the way.

Why The Earliest Creative Stage Usually Breaks Down

The beginning of a song is often the most fragile point. At that stage, nothing is stable yet. A theme may exist, but not the lines. A mood may exist, but not the instrumentation. A rhythm may exist in the creator’s head, but not in a form that another person or program can interpret clearly.

Traditional music production asks users to translate those fragments into multiple specialized layers. Writing, arranging, voicing, and production each require their own habits. For trained musicians, this can be exciting. For everyone else, it is often the point where an idea stalls.

AISong appears designed around that exact problem. The platform reduces the pressure of starting correctly. You do not need to arrive with a finished composition. You can arrive with a scene, a phrase, a style reference, or a lyric draft. From there, the system can turn partial creative material into a more complete musical direction.

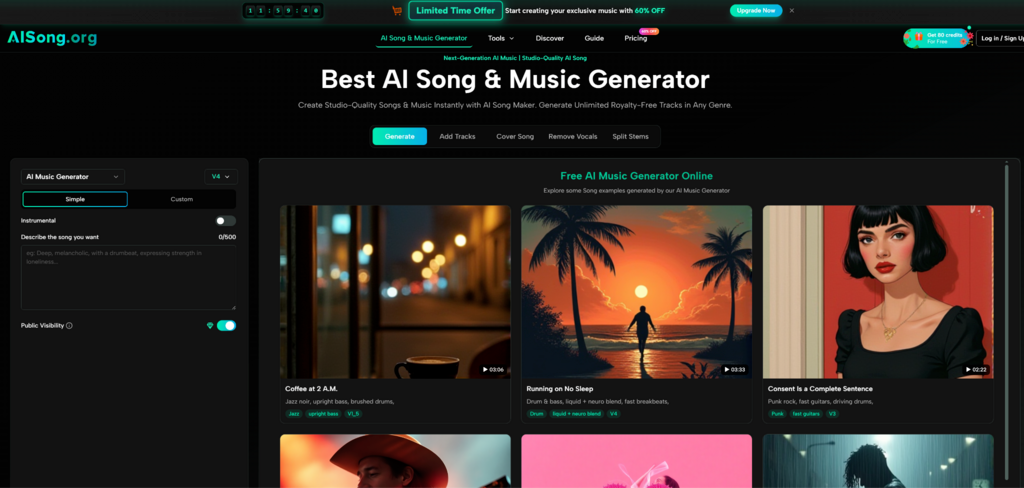

Prompt-Based Creation Removes Technical Friction

One of the clearest examples of this is Simple Mode. The user can describe the kind of song they want in natural language, and the system handles the rest. That includes the lyrics, melody, instrumentation, and vocals. In practical terms, this means the first requirement is not technical knowledge but clarity of intent.

For casual creators, this lowers the entry barrier dramatically. For experienced creators, it can still be useful as a fast ideation tool. Either way, the mode reframes composition as communication rather than production.

Lyric-Based Creation Preserves Narrative Control

Custom Mode changes the relationship between the user and the system. Instead of letting the platform invent everything, the user can guide the song more deliberately by entering lyrics or generating them from themes and keywords. This is important because lyrics are often where identity, tone, and storytelling live.

A melody can be adjusted later. A mix can be cleaned up later. But once a song’s emotional language feels wrong, the whole piece often feels wrong. That is why lyric control matters. It gives users a way to anchor the song before the platform begins interpreting style and arrangement.

How AISong Structures Creative Choices Clearly

AISong becomes easier to understand once you stop seeing it as a single generator and start seeing it as a sequence of controlled trade-offs.

Step One Sets The Starting Material

The first decision is what kind of input you want to give. Some users will begin with a broad text description. Others will bring in lyrics, title ideas, or structured sections such as verses and choruses. This step matters because it determines how much of the song will be invented by the system and how much will be defined by the creator.

Step Two Matches Output Quality To Creative Intent

The second decision is model selection. Different model tiers change the balance between cost, speed, and polish. A lighter model may be enough for exploration, while a stronger model may be more suitable when the goal is to turn a promising concept into a presentation-ready track.

This is not just a pricing issue. It changes creative behavior. When users know they can sketch cheaply and then refine selectively, they are more likely to experiment early instead of overcommitting to the first idea.

Step Three Tunes The Personality Of The Song

Advanced settings such as vocal gender, style weight, and weirdness constraint are subtle but meaningful. They suggest the platform is not only about generating music quickly but about helping users steer the personality of the result.

Style Weight Shapes Obedience And Flexibility

Style Weight appears to control how strictly the output follows the written prompt. This matters when users need either exact alignment or a wider range of interpretation. A brand team making music for a campaign may want more precision. A creator looking for unexpected inspiration may prefer more freedom.

Weirdness Constraint Defines Creative Risk

The weirdness setting points to another useful distinction. Not every creator wants the same level of novelty. Some want recognizable, dependable structure. Others want the system to push into more unusual territory. By exposing that choice, the platform acknowledges that “better” is not always the same as “more surprising.”
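One way to make these three steps concrete is to write them down as a single request object. The sketch below is purely illustrative: the class name `SongRequest`, the field names, the tier labels `draft` and `polished`, and the 0-to-1 ranges are all my own assumptions, not the platform's actual API or settings scale. It only shows how the starting material, the model tier, and the personality settings could be kept as separate, explicit decisions.

```python
from dataclasses import dataclass, replace
from typing import Optional

# Hypothetical tier labels, chosen for illustration only.
ALLOWED_TIERS = ("draft", "polished")


@dataclass(frozen=True)
class SongRequest:
    # Step one: starting material (a free-text prompt and/or the user's own lyrics).
    prompt: Optional[str] = None
    lyrics: Optional[str] = None
    # Step two: how much cost and polish to spend on this attempt.
    model_tier: str = "draft"
    # Step three: personality settings (ranges are assumed, not documented).
    vocal_gender: Optional[str] = None
    style_weight: float = 0.5  # higher = follow the written style more strictly
    weirdness: float = 0.5     # higher = allow more unusual results

    def __post_init__(self) -> None:
        if not (self.prompt or self.lyrics):
            raise ValueError("provide a prompt, lyrics, or both")
        if self.model_tier not in ALLOWED_TIERS:
            raise ValueError(f"unknown model tier: {self.model_tier!r}")
        for name in ("style_weight", "weirdness"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1")

    @property
    def mode(self) -> str:
        # Lyrics supplied by the user imply the lyric-led (custom) path.
        return "custom" if self.lyrics else "simple"


# Sketch cheaply first, then refine only the idea worth keeping.
sketch = SongRequest(prompt="restless, warm, cinematic ballad")
final = replace(sketch, model_tier="polished", style_weight=0.9)
```

Keeping the object immutable and deriving the refined version with `replace` mirrors the draft-first habit described above: the starting material stays fixed while only the tier and steering settings change.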

Why Regeneration Becomes A Creative Method

One of the more important details in AISong’s workflow is the regenerate function. On the surface, it looks like a convenience feature. In practice, it changes how the whole platform can be used.

Instead of treating the first output as a pass-or-fail event, users can hold the same lyrical and stylistic foundation and ask for alternative realizations. That is valuable because many music decisions are comparative. It is easier to recognize the right version after hearing three interpretations than by imagining perfection in advance.

For this reason, regeneration is not merely corrective. It becomes part of the composition process itself. The creator begins to work by selecting from variants, noticing which emotional details feel strongest, and then narrowing toward the version that fits the purpose best.

Iteration Replaces Perfection At The Start

This creates a healthier expectation around AI music. The point is not always to get the perfect song immediately. The point is to get to a meaningful set of musical directions quickly enough that taste and judgment can take over. In many creative workflows, that is already a major gain.

Why The Editing Tools Change The Platform’s Role

AISong becomes more compelling when viewed beyond the initial generation screen. The built-in tools for removing vocals, splitting stems, and adding tracks suggest that the song is not meant to remain frozen in its first output form.

Stem Splitting Makes Songs More Reusable

Stem extraction matters because it turns a finished-looking song back into editable parts. That opens new uses. A creator may want to isolate vocals, study arrangement layers, create alternate edits, or bring selected components into another production environment. Even if the first mix is not ideal, the separated structure can still be valuable.

Track Addition Supports Incomplete Ideas

Add Tracks points to another practical use case. Some users have a vocal concept but not the instrumental frame. Others have an instrumental bed but need vocal content. A tool that can bridge both directions is more adaptable than one that only starts from text. It allows partially developed ideas to become more complete without forcing users to begin again from zero.

Where This Platform Makes The Most Sense

The clearest fit seems to be creators who need movement more than perfection. Video editors, social media teams, indie artists, podcast producers, and marketers often need songs that are emotionally aligned, structurally complete, and fast to produce. They may not need studio-level originality every time. They need usable material now, and they need enough control to revise when necessary.

That is where AISong appears strongest. It turns vague creative demand into something concrete enough to review, revise, and repurpose.

Which Capabilities Matter Most For Understanding It

| Creative Need | Platform Response | Why It Matters |
| --- | --- | --- |
| Start from an idea only | Simple prompt-based generation | Reduces entry barriers for non-musicians |
| Keep control over words | Custom lyric-based generation | Preserves tone and narrative direction |
| Explore before polishing | Multiple model tiers | Supports draft-first, refine-later workflows |
| Compare alternatives | Regenerate function | Encourages selection through variation |
| Edit internal components | Vocal removal and stem splitting | Extends value beyond first generation |
| Complete partial concepts | Add Tracks tools | Helps bridge unfinished music ideas |

What The Platform Still Cannot Decide For You

Even with all these tools, the hardest part of music remains human. The system can offer structure, speed, and variety, but it cannot fully determine what emotional register best fits a scene, a message, or a creative identity. Users still need to decide whether a song feels sincere, whether the wording fits the intended audience, and whether the musical energy matches the purpose.

That is why I would not describe AISong as an automatic replacement for music creation. It feels more accurate to describe it as an accelerator for early judgment. It shortens the route from uncertainty to options, and from options to selection.

Human Taste Still Determines The Best Version

A useful output is not always the most technically impressive one. Sometimes the right track is simply the one that leaves the correct emotional residue. Platforms like AISong can generate possibilities quickly, but the final sense of fit still comes from human listening.

The Real Shift Is Operational, Not Magical

That is the broader significance of the platform. It does not remove the need for taste, but it reorganizes when taste enters the process. Instead of struggling for hours just to produce a first demo, users can reach the listening and editing stage much sooner. That shift alone changes who gets to make music ideas tangible and how often they can try.